
Predictive machine-learning models are trained, using millions of examples, to predict whether a particular X-ray shows signs of a tumor or whether a particular borrower is likely to default on a loan. Generative AI can be thought of as a machine-learning model that is trained to create new data, rather than making a prediction about a specific dataset.

"When it comes to the actual machinery underlying generative AI and other types of AI, the distinctions can be a little bit blurry. Oftentimes, the same algorithms can be used for both," says Phillip Isola, an associate professor of electrical engineering and computer science at MIT, and a member of the Computer Science and Artificial Intelligence Laboratory (CSAIL).


One big difference is that ChatGPT is far larger and more complex, with billions of parameters. It has also been trained on an enormous amount of data, in this case much of the publicly available text on the internet. In this huge corpus of text, words and sentences appear in sequences with certain dependencies.
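The idea of learning from a corpus which words tend to follow which, and then using those statistics to suggest a continuation, can be sketched with a toy bigram counter. This is a deliberately simplified stand-in, not how ChatGPT works internally; the function names `train_bigrams` and `suggest_next` and the tiny corpus are hypothetical.

```python
from __future__ import annotations

from collections import Counter, defaultdict


def train_bigrams(text: str) -> dict[str, Counter]:
    """Toy model (hypothetical helper): count which words follow each word."""
    words = text.lower().split()
    follows: dict[str, Counter] = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def suggest_next(follows: dict[str, Counter], word: str) -> str | None:
    """Return the most frequent successor of `word`, or None if it was never seen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigrams(corpus)
print(suggest_next(model, "the"))  # → cat
print(suggest_next(model, "on"))   # → the
```

A bigram model only looks one word back. ChatGPT's far larger neural network captures dependencies that span whole paragraphs.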

ChatGPT learns the patterns of these blocks of text and uses this knowledge to propose what might come next. While bigger datasets are one catalyst that led to the generative AI boom, a variety of major research advances also led to more complex deep-learning architectures. In 2014, a machine-learning architecture known as a generative adversarial network (GAN) was proposed by researchers at the University of Montreal.
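In the notation of the original 2014 GAN paper, a generator $G$ maps random noise $z$, drawn from a prior $p_z$, to synthetic samples, and a discriminator $D$ outputs the probability that its input came from the real data distribution $p_{\text{data}}$. Training is framed as a two-player minimax game over a value function $V(D, G)$:

```latex
\min_G \max_D \; V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
```

$D$ pushes $V$ up by scoring real samples near 1 and generated ones near 0, while $G$ pushes $V$ down by producing samples that $D$ scores as real. In practice, implementations often train $G$ to maximize $\log D(G(z))$ instead, because that gives stronger gradients early in training.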

A GAN uses two models that work in tandem: a generator that learns to produce a target output, such as an image, and a discriminator that learns to tell real data apart from the generator's output. The generator tries to fool the discriminator, and in the process learns to make more realistic outputs. The image generator StyleGAN is based on these types of models. Diffusion models were introduced a year later by researchers at Stanford University and the University of California at Berkeley. By iteratively refining their output, these models learn to generate new data samples that resemble samples in a training dataset, and they have been used to create realistic-looking images.
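To make "iteratively refining their output" concrete, here is a toy diffusion sampler that uses the update rule of the later DDPM formulation. It assumes the "training data" are just numbers drawn from a 1-D Gaussian, so the noise predictor that a real model would learn with a neural network can be written in closed form. The schedule values and function names are illustrative choices, not taken from any particular model.

```python
import math
import random
import statistics


def make_schedule(steps: int, beta_start: float = 1e-4, beta_end: float = 0.1):
    """Linear schedule of per-step noise variances beta_t and their running products alpha_bar_t."""
    betas = [beta_start + (beta_end - beta_start) * t / (steps - 1) for t in range(steps)]
    alpha_bars, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        alpha_bars.append(prod)
    return betas, alpha_bars


def predict_noise(x_t: float, alpha_bar: float, mu: float, sigma: float) -> float:
    """Expected noise E[eps | x_t], assuming the data are 1-D Gaussian N(mu, sigma^2).

    Assumption for this sketch: a real diffusion model learns this function with
    a neural network; here it is computed in closed form so no training is needed.
    """
    var_t = alpha_bar * sigma**2 + (1.0 - alpha_bar)
    return math.sqrt(1.0 - alpha_bar) * (x_t - math.sqrt(alpha_bar) * mu) / var_t


def generate(n: int, mu: float, sigma: float, steps: int = 200, seed: int = 0) -> list:
    """Draw n samples by starting from pure noise and denoising step by step."""
    rng = random.Random(seed)
    betas, alpha_bars = make_schedule(steps)
    samples = []
    for _ in range(n):
        x = rng.gauss(0.0, 1.0)  # begin with pure Gaussian noise
        for t in reversed(range(steps)):
            beta, ab = betas[t], alpha_bars[t]
            eps_hat = predict_noise(x, ab, mu, sigma)
            # Remove this step's share of the predicted noise (DDPM mean update).
            x = (x - beta / math.sqrt(1.0 - ab) * eps_hat) / math.sqrt(1.0 - beta)
            if t > 0:
                x += math.sqrt(beta) * rng.gauss(0.0, 1.0)  # re-inject a little fresh noise
        samples.append(x)
    return samples


samples = generate(1000, mu=3.0, sigma=0.5)
print(round(statistics.mean(samples), 2), round(statistics.stdev(samples), 2))
```

The printed mean and standard deviation should land close to 3.0 and 0.5, the parameters of the toy "training" distribution, even though every sample started as pure noise.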

These are only a few of many approaches that can be used for generative AI. What all of these approaches have in common is that they convert inputs into a set of tokens, which are numerical representations of chunks of data. As long as your data can be converted into this standard token format, then in theory, you could apply these methods to generate new data that look similar.
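Production systems typically use subword tokenizers (byte-pair encoding, for example) and then map each token id to a learned vector, but the core step of turning data into a sequence of numeric tokens can be sketched with a toy word-level vocabulary. The function names below are hypothetical.

```python
def build_vocab(texts):
    """Assign an integer id to every distinct word seen in the training texts."""
    vocab = {"<unk>": 0}  # id 0 is reserved for words never seen during training
    for text in texts:
        for word in text.lower().split():
            vocab.setdefault(word, len(vocab))
    return vocab


def encode(text, vocab):
    """Convert text into a list of token ids."""
    return [vocab.get(word, vocab["<unk>"]) for word in text.lower().split()]


def decode(ids, vocab):
    """Map token ids back to words."""
    inverse = {i: w for w, i in vocab.items()}
    return " ".join(inverse[i] for i in ids)


vocab = build_vocab(["generative models create new data", "models learn from data"])
ids = encode("models create data", vocab)
print(ids)                 # → [2, 3, 5]
print(decode(ids, vocab))  # → models create data
```

Once text is a list of integers like this, a model only ever works with numbers, which is why the same family of techniques can be applied to images, audio or code once they are tokenized too.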


While generative models can achieve incredible results, they aren't the best choice for all types of data. For tasks that involve making predictions on structured data, like the tabular data in a spreadsheet, generative AI models tend to be outperformed by traditional machine-learning methods, says Devavrat Shah, the Andrew and Erna Viterbi Professor in Electrical Engineering and Computer Science at MIT and a member of IDSS and of the Laboratory for Information and Decision Systems.


"Previously, humans had to talk to machines in the language of machines to make things happen. Now, this interface has figured out how to talk to both humans and machines," says Shah. Generative AI chatbots are now being used in call centers to field questions from human customers, but this application underscores one potential red flag of implementing these models: worker displacement.


One promising future direction Isola sees for generative AI is its use for fabrication. Instead of having a model make an image of a chair, perhaps it could generate a plan for a chair that could be produced. He also sees future uses for generative AI systems in developing more generally intelligent AI agents.

"We have the ability to think and dream in our heads, to come up with interesting ideas or plans, and I think generative AI is one of the tools that will empower agents to do that, as well," Isola says.


Two more recent advances have played a critical part in generative AI going mainstream: transformers and the breakthrough language models they enabled. Transformers are a type of neural network architecture that made it possible for researchers to train ever-larger models without having to label all of the data in advance.
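Training without hand-labeled data works because the text can supply its own targets. GPT-style transformers, for instance, are trained to predict the next token, and the hypothetical helper below shows how such (context, target) examples fall out of an unlabeled token sequence automatically. It only builds the training pairs; no model is trained here.

```python
def next_token_pairs(token_ids, context_size):
    """Turn an unlabeled token sequence into (context, target) training examples.

    Each target is simply the token that follows its context window, so the
    text provides the labels and no human annotation is required.
    """
    pairs = []
    for end in range(1, len(token_ids)):
        context = token_ids[max(0, end - context_size):end]
        pairs.append((context, token_ids[end]))
    return pairs


tokens = [5, 9, 2, 7, 3]  # e.g. the ids of a five-token sentence
for context, target in next_token_pairs(tokens, context_size=3):
    print(context, "->", target)
# [5] -> 9
# [5, 9] -> 2
# [5, 9, 2] -> 7
# [9, 2, 7] -> 3
```

Because every position in every document yields a training example this way, the amount of usable training data grows with the amount of raw text available, which is what allowed models to keep scaling up.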

Models that connect language with images are the basis for tools like Dall-E, which automatically create images from a text description, and for tools that generate text captions from images. Despite these breakthroughs, we are still in the early days of using generative AI to create readable text and photorealistic stylized graphics.

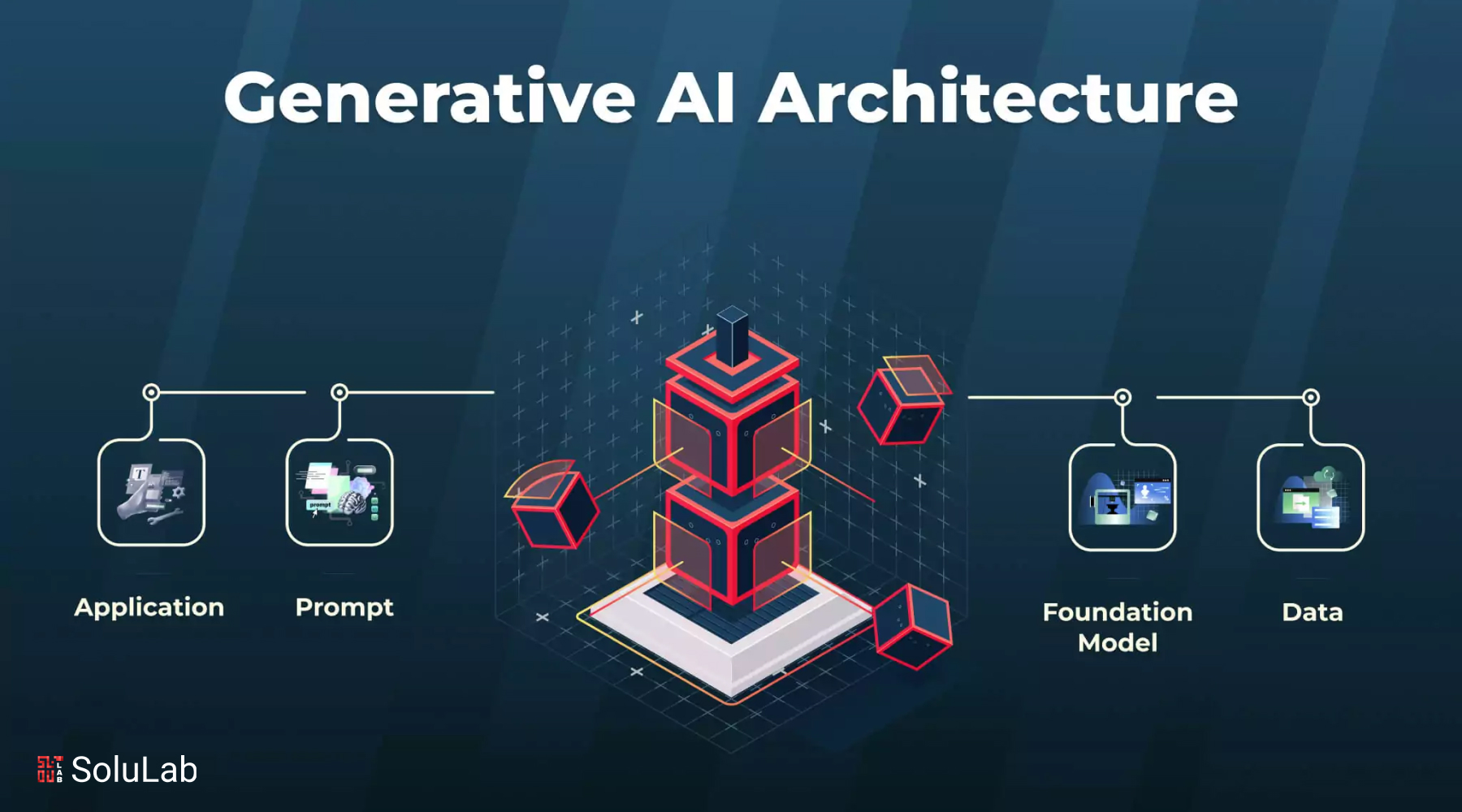

Going forward, this technology could help write code, design new drugs, develop products, redesign business processes and transform supply chains. Generative AI starts with a prompt that could be in the form of a text, an image, a video, a design, musical notes, or any input that the AI system can process.

After an initial response, you can also customize the results with feedback about the style, tone and other elements you want the generated content to reflect.

Generative AI models combine various AI algorithms to represent and process content. For example, to generate text, various natural language processing techniques transform raw characters (e.g., letters, punctuation and words) into sentences, parts of speech, entities and actions, which are represented as vectors using multiple encoding techniques.

Researchers have been creating AI and other tools for programmatically generating content since the early days of AI. The earliest approaches, known as rule-based systems and later as "expert systems," used explicitly crafted rules for generating responses or data sets. Neural networks, which form the basis of many of today's AI and machine learning applications, flipped the problem around: instead of following hand-written rules, they learn the rules by finding patterns in existing data sets.
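The contrast between hand-written rules and rules learned from data can be sketched with the logical AND function. `expert_rule` encodes the rule directly, while `train_perceptron` learns it from labeled examples using the classic perceptron update, a single-neuron ancestor of today's neural networks rather than anything like a modern generative model. All names and the four-row data set are illustrative.

```python
def expert_rule(x1: int, x2: int) -> int:
    """Rule-based approach: a person writes the rule (logical AND) by hand."""
    return 1 if x1 == 1 and x2 == 1 else 0


def train_perceptron(examples, epochs: int = 20, lr: int = 1):
    """Learned approach: a single artificial neuron infers the rule from labeled examples."""
    w1 = w2 = bias = 0
    for _ in range(epochs):
        for (x1, x2), label in examples:
            prediction = 1 if w1 * x1 + w2 * x2 + bias > 0 else 0
            error = label - prediction  # 0 when correct, +1 or -1 when wrong
            # Classic perceptron update: shift the weights toward the correct answer.
            w1 += lr * error * x1
            w2 += lr * error * x2
            bias += lr * error
    return lambda x1, x2: 1 if w1 * x1 + w2 * x2 + bias > 0 else 0


# Hypothetical "existing data set": input pairs labeled with the desired output.
examples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
learned = train_perceptron(examples)
for (x1, x2), _ in examples:
    print((x1, x2), "rule:", expert_rule(x1, x2), "learned:", learned(x1, x2))
```

Nobody tells the perceptron what AND means; it ends up agreeing with the hand-written rule purely from the examples. That shift, from writing rules to learning them from data, is what modern neural networks scale up.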

Developed in the 1950s and 1960s, the first neural networks were limited by a lack of computational power and small data sets. It was not until the advent of big data in the mid-2000s and improvements in computer hardware that neural networks became practical for generating content. The field accelerated when researchers found a way to get neural networks to run in parallel across the graphics processing units (GPUs) that the computer gaming industry was using to render video games.

ChatGPT, Dall-E and Gemini (formerly Bard) are popular generative AI interfaces. Dall-E, for example, is a multimodal application trained on images and their associated text descriptions; it connects the meaning of words to visual elements.


Dall-E 2, a second, more capable version, was released in 2022. It enables users to generate imagery in multiple styles driven by user prompts.

ChatGPT, the AI-powered chatbot that took the world by storm in November 2022, was built on OpenAI's GPT-3.5 implementation. OpenAI has provided a way to interact with and fine-tune text responses via a chat interface with interactive feedback.

GPT-4 was released on March 14, 2023. ChatGPT incorporates the history of its conversation with a user into its results, simulating a real conversation. After the enormous popularity of the new GPT interface, Microsoft announced a significant new investment in OpenAI and integrated a version of GPT into its Bing search engine.
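How ChatGPT handles conversation context internally is not described here, but the general idea that a model sees earlier turns when writing its next reply can be sketched as follows. This is a hypothetical illustration: the role labels, the `build_prompt` name and the `max_turns` cutoff are assumptions, not OpenAI's actual format.

```python
def build_prompt(history, user_message, max_turns=6):
    """Hypothetical helper: fold recent conversation turns into one prompt string.

    history is a list of (role, text) pairs. Only the last max_turns are kept,
    a simple stand-in for the limited context window that real models have.
    """
    recent = history[-max_turns:] if max_turns > 0 else []
    turns = recent + [("user", user_message)]
    lines = [f"{role}: {text}" for role, text in turns]
    lines.append("assistant:")  # cue the model to write the next reply
    return "\n".join(lines)


history = [
    ("user", "Recommend a sci-fi novel."),
    ("assistant", "Try 'The Left Hand of Darkness' by Ursula K. Le Guin."),
]
print(build_prompt(history, "Who else wrote books like that?"))
```

The follow-up question "Who else wrote books like that?" is only answerable because the earlier exchange travels along with it in the prompt.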
